According to the principle of maximum entropy, if nothing is known about a distribution except that it belongs to a certain class (usually defined in terms of specified properties or measures), then the distribution with the largest entropy should be chosen as the least-informative default. With the aid of Lagrange multipliers, we can derive that the solution to the MaxEnt problem takes the form of a Gibbs distribution:

\[ p(x) = \frac{1}{Z} \exp\Big( \sum_{j=1}^{k} \mu_j f_j(x) \Big) \]

where \(\mu_1, \dots, \mu_k\) are the parameters and \(Z\) is the partition function.

As it turns out, maximizing entropy is the dual problem of maximum likelihood. The solution to the MaxEnt problem can therefore be found by solving the maximum likelihood estimation given the observations \(x_1, \dots, x_m\):

\[ \max_{\mu} \sum_{i=1}^{m} \log p_{\mu}(x_i) \]

The solution sets of the two problems intersect at a single point, which solves both problems.

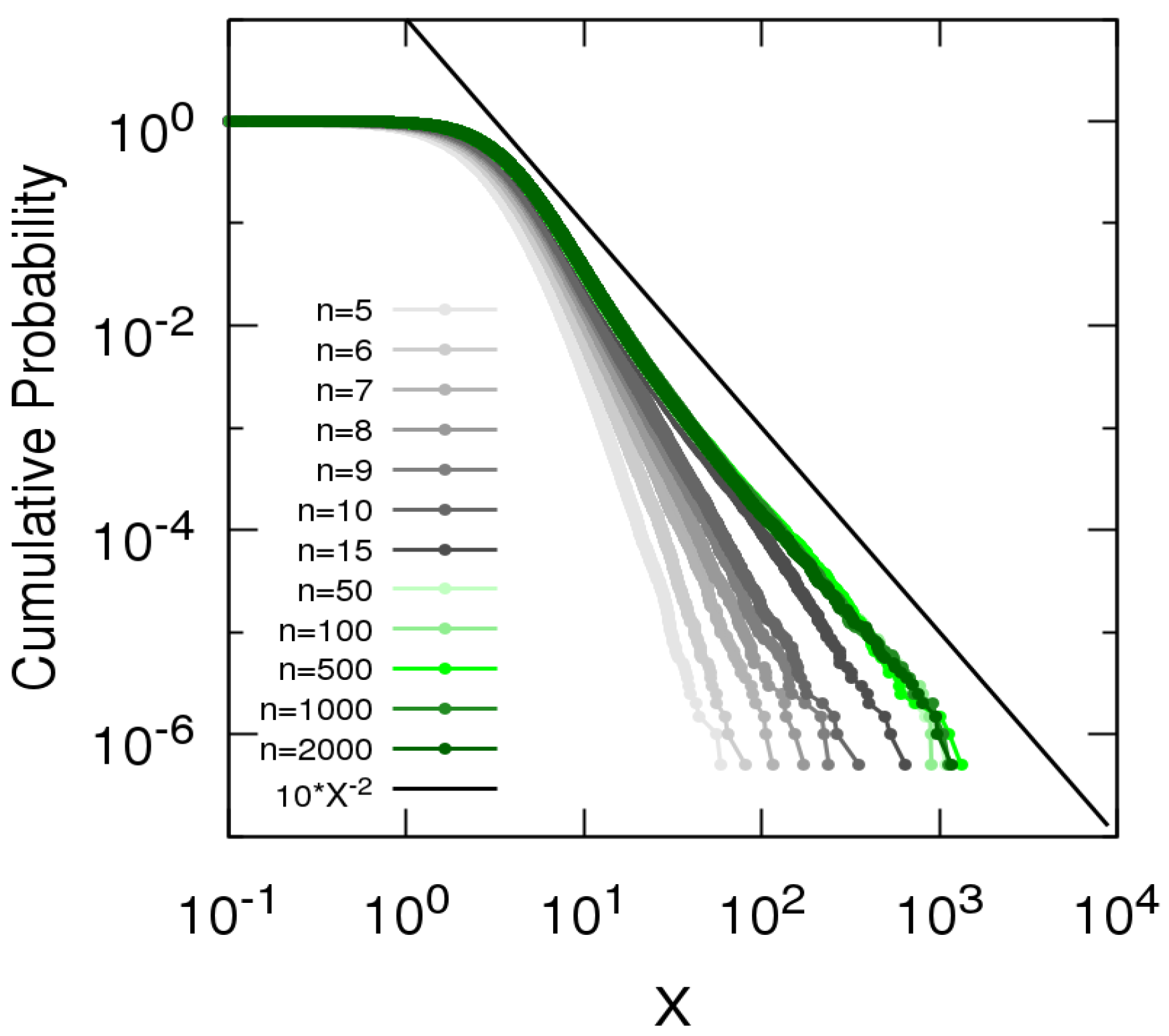
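The link between the Gibbs form and maximum likelihood can be checked numerically on a small case. The sketch below is only an illustration: it assumes a six-sided die with the single feature f(x) = x, a hypothetical list of observed rolls, and plain gradient ascent as the optimizer. The function names (`gibbs`, `fit_ml`, and so on) are made up for this example.

```python
import math

FACES = [1, 2, 3, 4, 5, 6]

def gibbs(mu):
    """Gibbs distribution over the faces: p_i proportional to exp(mu * i)."""
    weights = [math.exp(mu * i) for i in FACES]
    z = sum(weights)  # partition function Z
    return [w / z for w in weights]

def model_mean(mu):
    """Expected face value under the Gibbs distribution with parameter mu."""
    return sum(i * p for i, p in zip(FACES, gibbs(mu)))

def avg_log_likelihood(mu, rolls):
    """Average log-likelihood of the observed rolls under gibbs(mu)."""
    log_z = math.log(sum(math.exp(mu * i) for i in FACES))
    return sum(mu * x - log_z for x in rolls) / len(rolls)

def fit_ml(rolls, steps=5000, lr=0.1):
    """Gradient ascent on the average log-likelihood.

    The gradient with respect to mu is (empirical mean - model mean),
    so the maximizer is exactly the point where the expectation
    constraint of the entropy problem is satisfied.
    """
    empirical_mean = sum(rolls) / len(rolls)
    mu = 0.0
    for _ in range(steps):
        mu += lr * (empirical_mean - model_mean(mu))
    return mu

# Hypothetical observations, not data from the text.
rolls = [6, 5, 6, 3, 4, 6, 2, 5, 6, 4]
mu_hat = fit_ml(rolls)
print("fitted mu:", mu_hat)
print("model mean:", model_mean(mu_hat), "empirical mean:", sum(rolls) / len(rolls))
```

At the fitted parameter the model mean matches the empirical mean, which is the expectation constraint of the entropy problem. This is the single point shared by the two solution sets.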

A pair of exponent laws is derived by postulating local entropy maximization. A power law with an exponent equal to 1 is derived from global entropy maximization. The hierarchical scaling law implies Zipf’s law, and a certain scaling range of the rank-size distribution is derived. On maximizing Tsallis entropy with non-extensive parameter \(q\), power law distributions are obtained, which recover the well-known Shannon family of exponential distributions as \(q \to 1\). The maximization of Shannon entropy subject to the shifted geometric mean constraints leads to a probability distribution in terms of the Hurwitz zeta function.

To give an example of such problems, let us assume we have a biased die and we want to estimate the probability of obtaining each number 1, 2, …, 6 when we roll it. The additional knowledge we have about the die is the expected number for each roll, which we denote \(b\):

\[ \sum_{i=1}^{6} i \, p_i = b \]

According to the maximum entropy principle, we can estimate the probability of obtaining each number, \(p\), by solving the maximum entropy problem:

\[ \max_{p} \; -\sum_{i=1}^{6} p_i \log p_i \quad \text{subject to} \quad \sum_{i=1}^{6} i \, p_i = b, \quad \sum_{i=1}^{6} p_i = 1, \quad p_i \ge 0 \]

where the expected value of the obtained number is an expectation constraint of this optimization problem. In practice, such expectation constraints can usually be obtained from an empirical distribution. For instance, the expectation over the empirical distribution in this example can be obtained by rolling the die multiple times and computing the average. In the next section, we will discuss how to solve the maximum entropy problem.
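The dice example can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions, not the method of the original post: it relies on the fact that the maximum entropy solution under a mean constraint has the form p_i proportional to exp(mu * i), and it finds mu by bisection. The target mean of 4.5 and the helper names (`gibbs`, `maxent_dice`) are made up for illustration.

```python
import math

FACES = [1, 2, 3, 4, 5, 6]

def gibbs(mu):
    """Distribution over the faces with p_i proportional to exp(mu * i)."""
    weights = [math.exp(mu * i) for i in FACES]
    z = sum(weights)  # partition function Z
    return [w / z for w in weights]

def mean(p):
    """Expected face value under distribution p."""
    return sum(i * pi for i, pi in zip(FACES, p))

def entropy(p):
    """Shannon entropy in nats."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def maxent_dice(target_mean, lo=-50.0, hi=50.0, tol=1e-12):
    """Bisect on mu until the Gibbs distribution matches target_mean.

    The mean is strictly increasing in mu (its derivative is the
    variance), so bisection over a wide bracket is sufficient here.
    """
    if not 1.0 < target_mean < 6.0:
        raise ValueError("target_mean must lie strictly between 1 and 6")
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if mean(gibbs(mid)) < target_mean:
            lo = mid
        else:
            hi = mid
    return gibbs((lo + hi) / 2)

# 4.5 is an assumed expected value, chosen only for illustration.
p = maxent_dice(4.5)
print([round(pi, 4) for pi in p])
print("mean:", mean(p), "entropy:", entropy(p))
```

With a target mean of 3.5 the same routine returns the uniform distribution, which matches the intuition that a fair die is the least-informative choice when the constraint carries no bias.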

Unlike the widely used Maximum Likelihood (ML) estimation, maximum entropy estimation is less frequently seen in solving machine learning problems. The Maximum Entropy (MaxEnt) problem is formalized as follows:

\[ \max_{p} \; H(p) = -\sum_{x} p(x) \log p(x) \quad \text{subject to} \quad \mathbb{E}_{p}[f_j(x)] = b_j, \quad j = 1, \dots, k \]

where \(p\) is a probability distribution and \((f_j, b_j)\) are (linear) expectation constraints on \(p\).

The Maximum Entropy and Maximum Likelihood duality states that the two problems:

- Maximizing entropy subject to expectation constraints.
- Maximizing likelihood given parameterized constraints on the distribution.

are convex duals of each other.
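One way to see the link between the two problems is to write out the Lagrangian of the entropy problem. The derivation below is a sketch that assumes a finite outcome space. The multiplier \(\lambda\) for the normalization constraint and the dual function name \(g\) are notational choices made here, and \(b_j\) is taken to be the empirical average of \(f_j\) over observations \(x_1, \dots, x_m\).

```latex
\begin{align*}
L(p, \mu, \lambda)
  &= -\sum_{x} p(x) \log p(x)
     + \sum_{j=1}^{k} \mu_j \Big( \sum_{x} p(x) f_j(x) - b_j \Big)
     + \lambda \Big( \sum_{x} p(x) - 1 \Big) \\
\frac{\partial L}{\partial p(x)}
  &= -\log p(x) - 1 + \sum_{j=1}^{k} \mu_j f_j(x) + \lambda = 0 \\
\Rightarrow \quad p_{\mu}(x)
  &= \frac{1}{Z(\mu)} \exp\Big( \sum_{j=1}^{k} \mu_j f_j(x) \Big),
  \qquad Z(\mu) = \sum_{x} \exp\Big( \sum_{j=1}^{k} \mu_j f_j(x) \Big) \\
g(\mu)
  &= L(p_{\mu}, \mu, \lambda) = \log Z(\mu) - \sum_{j=1}^{k} \mu_j b_j \\
-g(\mu)
  &= \frac{1}{m} \sum_{i=1}^{m} \log p_{\mu}(x_i)
  \qquad \text{when } b_j = \frac{1}{m} \sum_{i=1}^{m} f_j(x_i)
\end{align*}
```

Under these assumptions, minimizing the dual function \(g\) over \(\mu\) is the same as maximizing the average log-likelihood of the observations within the Gibbs family.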
